Since I’m located in the United States and speak English, I go to Microsoft’s speech service language support page and look at the en-US voices. Now that we have the SpeechConfig instance, we need to tell it which of these voices it should use for our application. These voices represent different genders, languages, and regions throughout the world and can give your applications a surprisingly human touch.

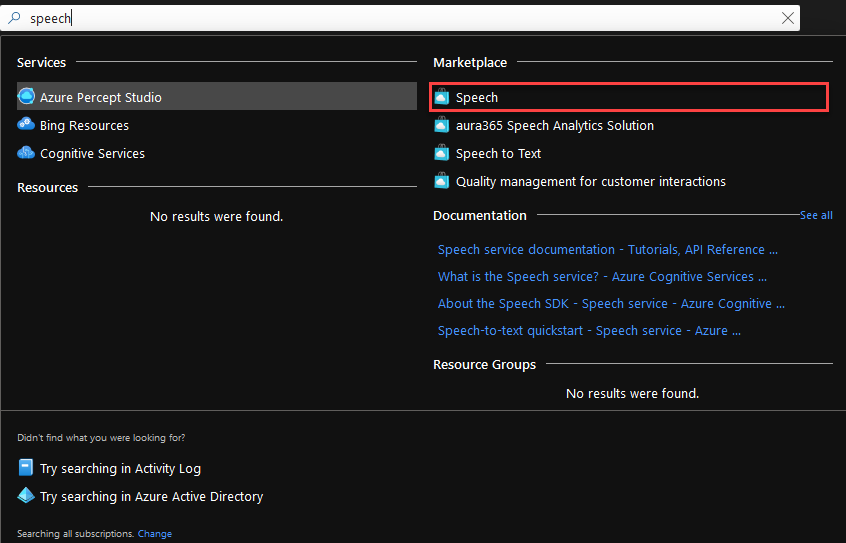

Choosing a VoiceĪzure Cognitive Services offers a wide variety of voices to use in speech. Because of this, do not check your key into source control, but rather store it in a configuration file that can be securely stored. Security Note: your subscription key is a sensitive piece of information because anyone can use that key to make requests to Azure at a pay-per-use cost model and you get the bill for their requests if you’re not careful. SpeechConfig speechConfig = SpeechConfig.FromSubscription(subscriptionKey, region) String region = "northcentralus" // use your region instead String subscriptionKey = "YourSubscriptionKey" These values should come from a config file Microsoft gives us two keys so you can swap an application between keys and then regenerate the old key to keep these credentials more secure over time.īefore you can reference these APIs in C# code, you’ll need to add a reference to using NuGet package manager or via the. It does not matter which of the two keys you use. Keys can be found on the keys and endpoints blade of your resource in the Azure portal: See my article on cognitive services for more information on when to use a computer vision resource instead of a cognitive services resource Both will have the same information available on their Keys and Endpoints blade. Note: you can either use a cognitive services resource or a speech resource for these tasks. In order to work with text to speech, you must have first created either an Azure Cognitive Services resource or a Speech resource on Azure and have access to one of its keys and the name of the region that resource was deployed to.

In this article we’ll see how text to speech is easy and affordable in Azure Cognitive Services with only a few lines of C# code. Specifically, text to speech takes a string of text and converts it to an audio waveform that can then be played or saved to disk for later playback. I expected it to improve the recognition of the added keywords, but It's not working.Text to speech, or speech synthesis, lets a computer “speak” to the user using its speakers. Var cancellation = CancellationDetails.FromResult(result) NewMessage = "NOMATCH: Speech could not be recognized." Įlse if (result.Reason = ResultReason.Canceled) NewMessage = JsonConvert.SerializeObject(result) Įlse if (result.Reason = ResultReason.NoMatch) If (result.Reason = ResultReason.RecognizedSpeech)ĬonsoleMessage = JsonConvert.SerializeObject(result.Best())

Var result = await recognizer.RecognizeOnceAsync().ConfigureAwait(false) PhraseListGrammar phraseList = PhraseListGrammar.FromRecognizer(recognizer) Using (var recognizer = new SpeechRecognizer(config)) config.SpeechRecognitionLanguage = "en-US" Ĭonfig.SpeechRecognitionLanguage = "es-ES" Ĭonfig.OutputFormat = OutputFormat.Detailed var config = SpeechConfig.FromSubscription("mysubscriptionkey", "westus2") Yesterday I was trying this and it was working like a charm when using "en-US", now it's not working so there must be something missing. So I used the PhraseListGrammar Class, and it was working well when using "en-US" as the SpeechRecognitionLanguage but when I try to do the same when using "en-ES" it just omits all the phrases added and the problem persist for all the other languages, perhaps there's something I'm doing wrong. That's why I wanted to implement the Phrase List, which it's suppose to help you improving the accuracy of speech recognition. The problem is that when I say a word, the recognizer tries to guess the word instead of even considering what I was saying, for example when saying pseudowords. I'm setting up an application for Android using Unity, where I want to apply speech to text functionalities, for that purpose I'm using the SDK of Azure's Speech-Service.
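For the text to speech walkthrough, picking a voice and speaking a sentence might look roughly like the sketch below. It is a minimal example under stated assumptions, not the article's own code: the environment variable names `SPEECH_KEY` and `SPEECH_REGION` are hypothetical stand-ins for wherever you keep your configuration, and `en-US-GuyNeural` is only an example voice name; substitute any en-US voice shown on the language support page. It relies on the `Microsoft.CognitiveServices.Speech` NuGet package.

```csharp
using System;
using System.Threading.Tasks;
using Microsoft.CognitiveServices.Speech;

public static class Program
{
    public static async Task Main()
    {
        // Assumption: key and region live in environment variables with these
        // hypothetical names, so the key never lands in source control.
        string subscriptionKey = Environment.GetEnvironmentVariable("SPEECH_KEY")
            ?? throw new InvalidOperationException("Set SPEECH_KEY first.");
        string region = Environment.GetEnvironmentVariable("SPEECH_REGION")
            ?? throw new InvalidOperationException("Set SPEECH_REGION first.");

        SpeechConfig speechConfig = SpeechConfig.FromSubscription(subscriptionKey, region);

        // Assumption: example voice only; use any voice name from the support page.
        speechConfig.SpeechSynthesisVoiceName = "en-US-GuyNeural";

        // With no audio configuration supplied, audio plays on the default speaker.
        using var synthesizer = new SpeechSynthesizer(speechConfig);
        SpeechSynthesisResult result =
            await synthesizer.SpeakTextAsync("Hello from Azure text to speech.");

        if (result.Reason == ResultReason.SynthesizingAudioCompleted)
        {
            Console.WriteLine("Speech synthesis completed.");
        }
        else if (result.Reason == ResultReason.Canceled)
        {
            // Cancellation details usually explain a bad key, region, or voice name.
            var details = SpeechSynthesisCancellationDetails.FromResult(result);
            Console.WriteLine($"Canceled: {details.Reason}. {details.ErrorDetails}");
        }
    }
}
```

Reading the key from the environment rather than a string literal follows the security note about keeping the subscription key out of source control; a secured configuration file would serve the same purpose.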
